April 2026’s reports of seismic activity and tsunami warnings in Japan have again highlighted how critical early warning systems are. Events like these reinforce a consistent reality: detection is only part of the system. The ability to communicate alerts quickly and reliably remains central to reducing impact.

As landslide, earthquake and tsunami monitoring systems evolve, this communications challenge is becoming more complex. Monitoring is moving beyond single-parameter approaches toward multi-sensor systems that integrate different data types to improve situational awareness and reduce false positives. At the same time, research institutions are applying machine learning and deep learning techniques to identify patterns that may be difficult to detect through rule-based models alone.

These developments increase system capability, but they also change system requirements. More sensors generate more data. AI-driven approaches require datasets that are larger, more continuous and better contextualized. As a result, monitoring system design now has to account not only for detection, but also for power, data volume, transmission frequency, and the role of processing at the edge.

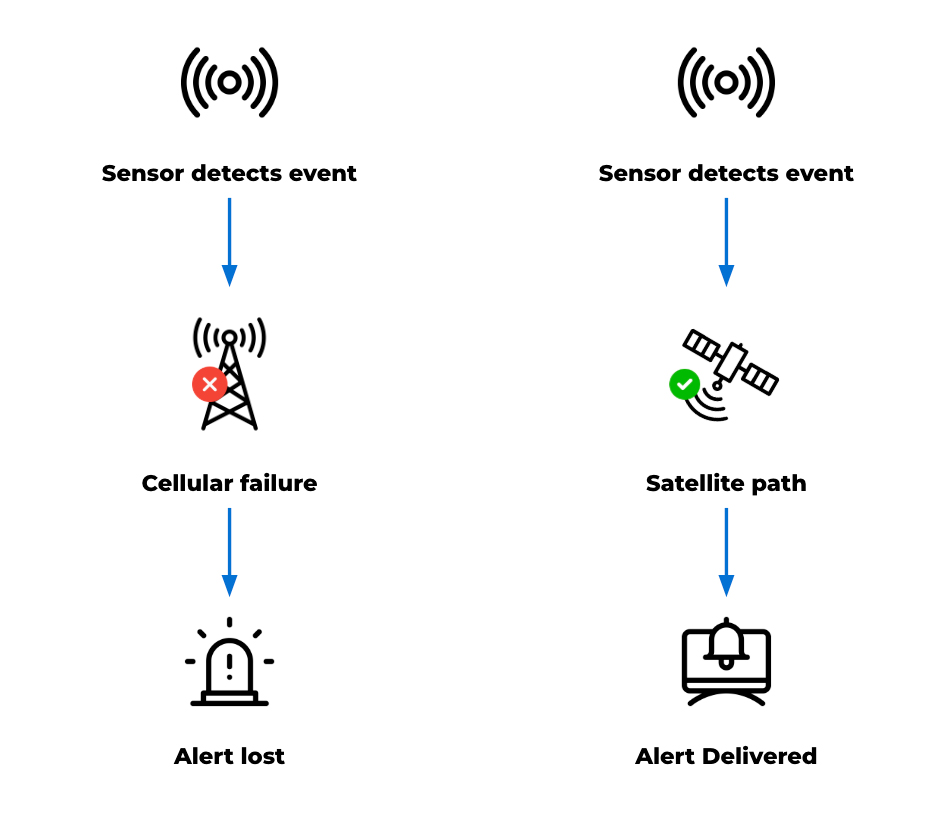

Detection is only useful if the alert gets through

Detection capability has improved significantly, and monitoring systems can often identify early signs of instability. But detection only matters if alerts reach the right people in time. That remains difficult in remote terrain. Monitoring sites are often located where infrastructure is limited, ground conditions are unstable, and access is restricted. Power depends on what the natural landscape allows, while cellular networks may be unavailable, unreliable, or vulnerable during an event.

As a result, a system can continue collecting data even when its communications path fails. This creates a gap between detection and action, reducing the value of the system no matter how capable the sensing layer is. International frameworks on early warning systems highlight that coverage is improving globally, but reliability and last-mile delivery remain key challenges.

Satellite connectivity can help close that gap. Because it does not rely on local infrastructure, it provides an independent communications path for remote or vulnerable locations. Ground Control’s earlier work in tsunami early warning systems in Thailand demonstrates how satellite connectivity can support resilience and last-mile data delivery, helping ensure that critical alerts can reach emergency response systems when local infrastructure is limited or unavailable.

Natural hazard monitoring is becoming more data intensive

As scientific research continues to evolve, natural hazard monitoring systems can generate a broad range of data. Traditional threshold-based systems typically produce discrete, event-driven messages. Multi-sensor deployments and research programs, by contrast, may generate continuous and contextual datasets that support analysis, model development, and validation. Within a single monitoring system, data may include:

- Time critical alerts

- Ongoing telemetry

- Device and system health data

- Larger datasets used for analysis and research

- Photographic, mapping, audio, or video data.

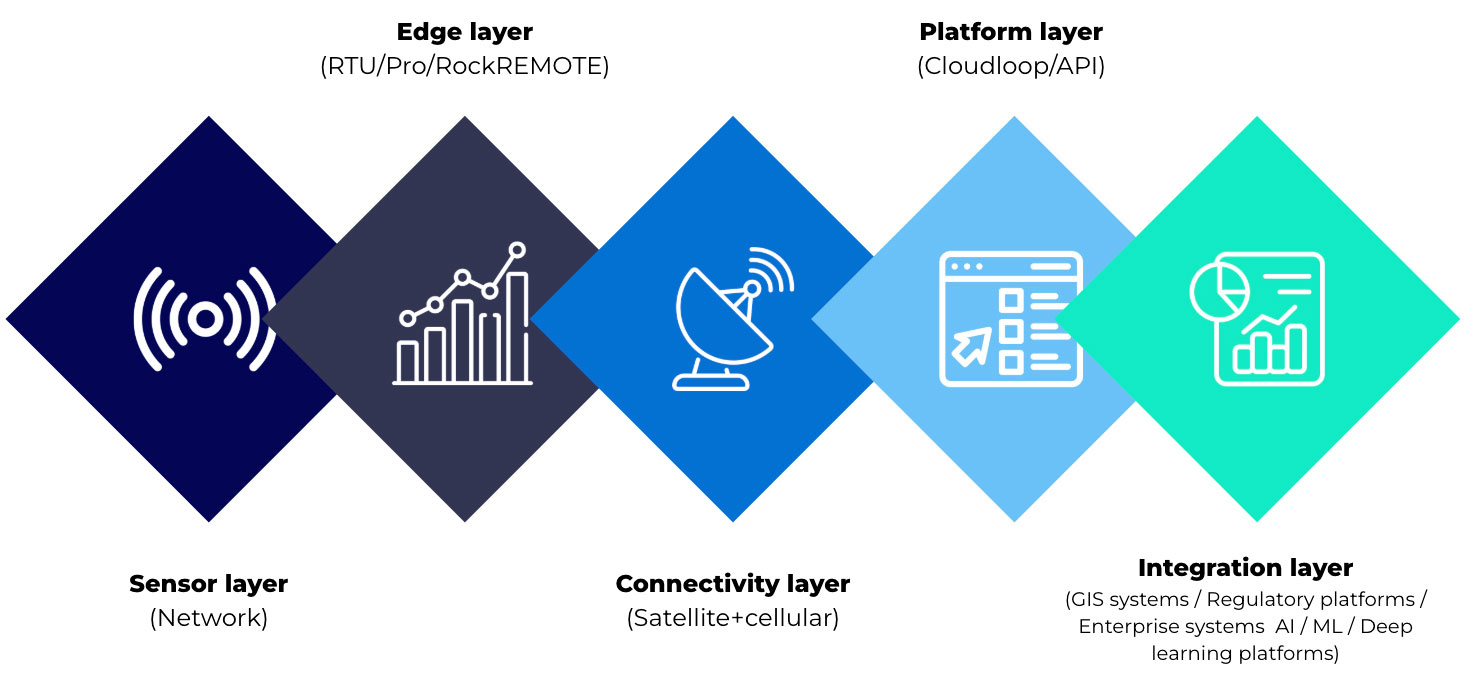

Modern systems may combine LoRaWAN sensor networks, remote sensing methods such as radar, terrestrial and non-terrestrial communications, and both edge and cloud processing. Satellite devices are introduced into these systems to address coverage gaps, provide an independent communication path, or support resilience where terrestrial networks are limited.

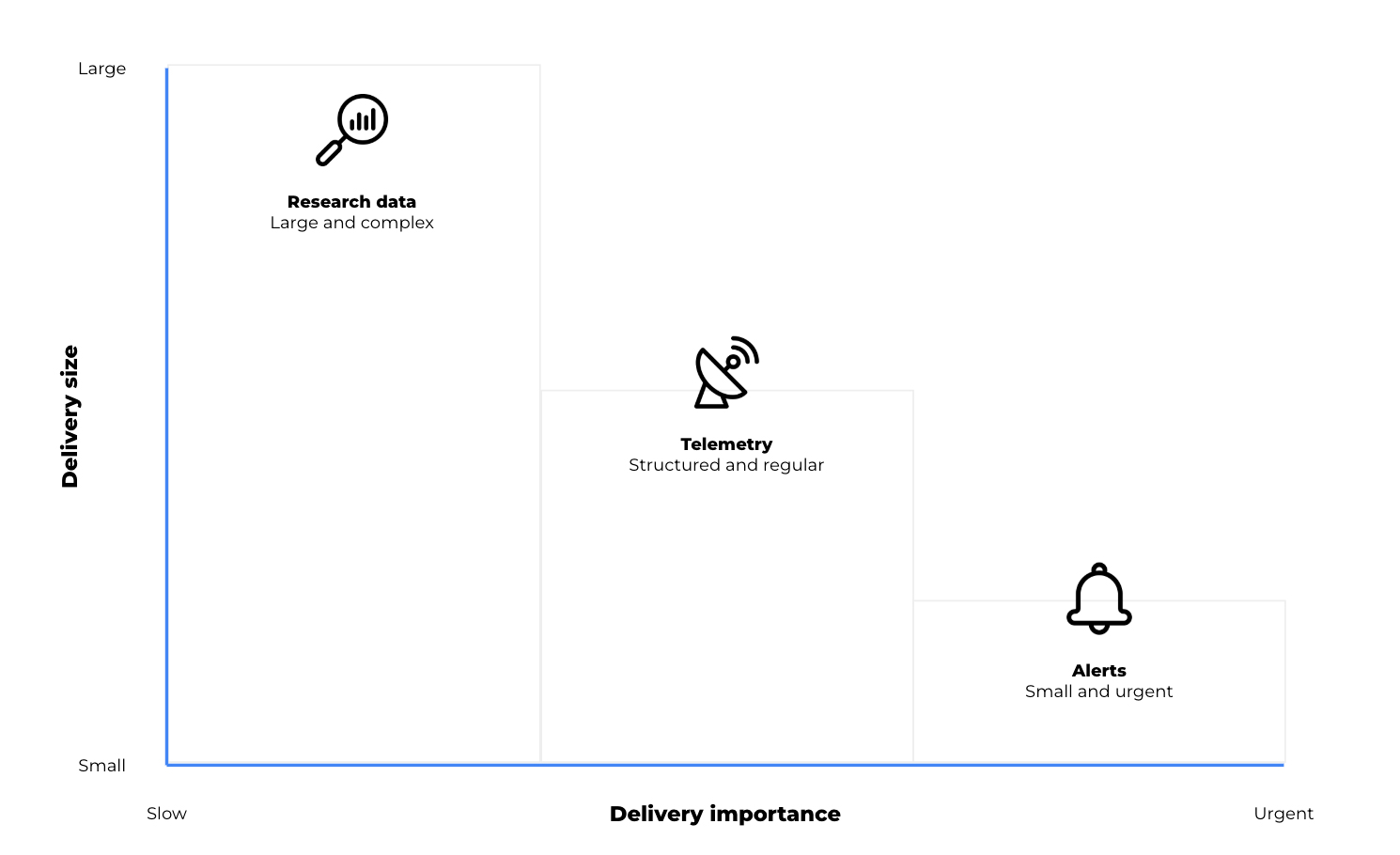

The key point is that not all data behaves in the same way. A short emergency alert has very different requirements from periodic telemetry or a large dataset used for research. In practice, satellite IoT devices can support different roles depending on the size, urgency and value of the data being transmitted.

Three types of data, three connectivity roles

In earthquake, landslide and tsunami monitoring, the connectivity question comes down to what kind of data needs to move, how urgently it needs to move, and how much processing should happen before it leaves the site. Broadly, data requirements fall into three categories:

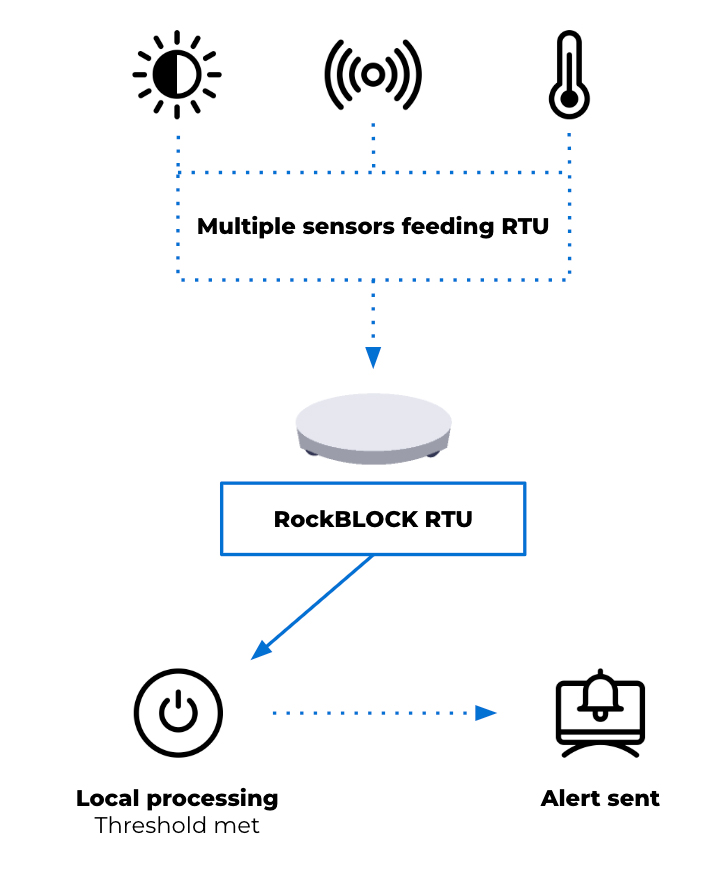

1. Time critical alerts: small messages, high consequence

For alert generation, the desired output may be a critical message triggered by defined thresholds or rules. When conditions are met, an alert can be generated at the device level and transmitted as a short message. This report-by-exception approach reduces dependence on continuous connectivity and helps keep satellite airtime costs to a minimum. Alerts are triggered by local conditions and transmitted only when a predefined threshold is breached.

This isn’t to suggest that hazard monitoring is simple. Rather, some parts of the system still depend on very small, high priority messages: a threshold has been crossed, a device has changed state, or an alarm needs to be raised.
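As a rough illustration of reporting by exception, the sketch below checks a reading against a threshold and, only when it is crossed, packs a short binary alert. The threshold value, the payload layout and the function names are assumptions made for this example; they do not describe any particular device or message format.

```python
import struct
import time

# Hypothetical threshold: tilt change (degrees) that should raise an alarm.
TILT_ALARM_DEG = 2.0

# Illustrative 11-byte payload: sensor id (uint16), alert code (uint8),
# Unix time (uint32), reading (float32), big-endian with no padding.
ALERT_FORMAT = ">HBIf"
ALERT_THRESHOLD_CROSSED = 1


def build_alert(sensor_id, reading, threshold=TILT_ALARM_DEG, now=None):
    """Return a packed alert if the reading crosses the threshold, else None."""
    if abs(reading) < threshold:
        return None  # nothing to report, so nothing is transmitted
    timestamp = int(time.time() if now is None else now)
    return struct.pack(
        ALERT_FORMAT, sensor_id, ALERT_THRESHOLD_CROSSED, timestamp, reading
    )


def decode_alert(payload):
    """Unpack an alert payload back into a dictionary (receiving side)."""
    sensor_id, code, timestamp, reading = struct.unpack(ALERT_FORMAT, payload)
    return {
        "sensor_id": sensor_id,
        "code": code,
        "timestamp": timestamp,
        "reading": reading,
    }
```

Under these assumptions a quiet reading produces no message at all, while an exceedance produces a payload of only a few bytes, which is the property that keeps airtime low.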

Devices such as RockBLOCK RTU are designed for this type of integration and event driven monitoring. Supporting multiple sensor inputs and enabling local data batching at the edge, the RTU allows data output to remain minimal in size but critical in importance.

This reflects the same principle seen in the tsunami early warning system mentioned earlier, where the priority is ensuring that critical signals can be generated and transmitted under constrained conditions. The RTU also offers sensing, data logging and action on basic threshold triggers. It’s not designed for high level data processing, but it can play an important role in raising an alarm, warning a community, and ensuring that the message gets through.

2. Telemetry: maintaining visibility between events

Alerting is only one layer of a monitoring system. Beyond emergency messages, earthquake and landslide monitoring systems also require ongoing visibility into environmental conditions and system status. Telemetry requirements may include periodic sensor readings, device diagnostics, system health information, and environmental trends over time. This data supports the interpretation of conditions leading up to and following an event. It can also be used to validate system performance and support operational decision making.

Here, architectural decisions often depend on project cost, power budget and the frequency of transmission. Compared with short alert messages, telemetry may require greater data capacity and more regular communication. It remains structured and predictable, but introduces additional considerations around bandwidth and power usage.
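The trade-off between transmission frequency, data volume and power can be estimated with simple arithmetic. The sketch below is one way to do that; the function name and all input figures (payload size, energy per message) are placeholders chosen for illustration, not measured values for any device or service.

```python
def daily_telemetry_budget(payload_bytes, interval_minutes, joules_per_message):
    """Estimate messages, bytes and transmit energy per day for periodic telemetry."""
    messages_per_day = (24 * 60) // interval_minutes
    return {
        "messages": messages_per_day,
        "bytes": messages_per_day * payload_bytes,
        "energy_joules": messages_per_day * joules_per_message,
    }


if __name__ == "__main__":
    # Placeholder inputs: compare three reporting intervals for the same payload.
    for interval in (15, 60, 360):
        budget = daily_telemetry_budget(
            payload_bytes=200, interval_minutes=interval, joules_per_message=5.0
        )
        print(f"every {interval} min: {budget}")
```

Running the comparison with real figures for a given site makes it easier to see how far the reporting interval can be shortened before the power or airtime budget becomes the limiting factor.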

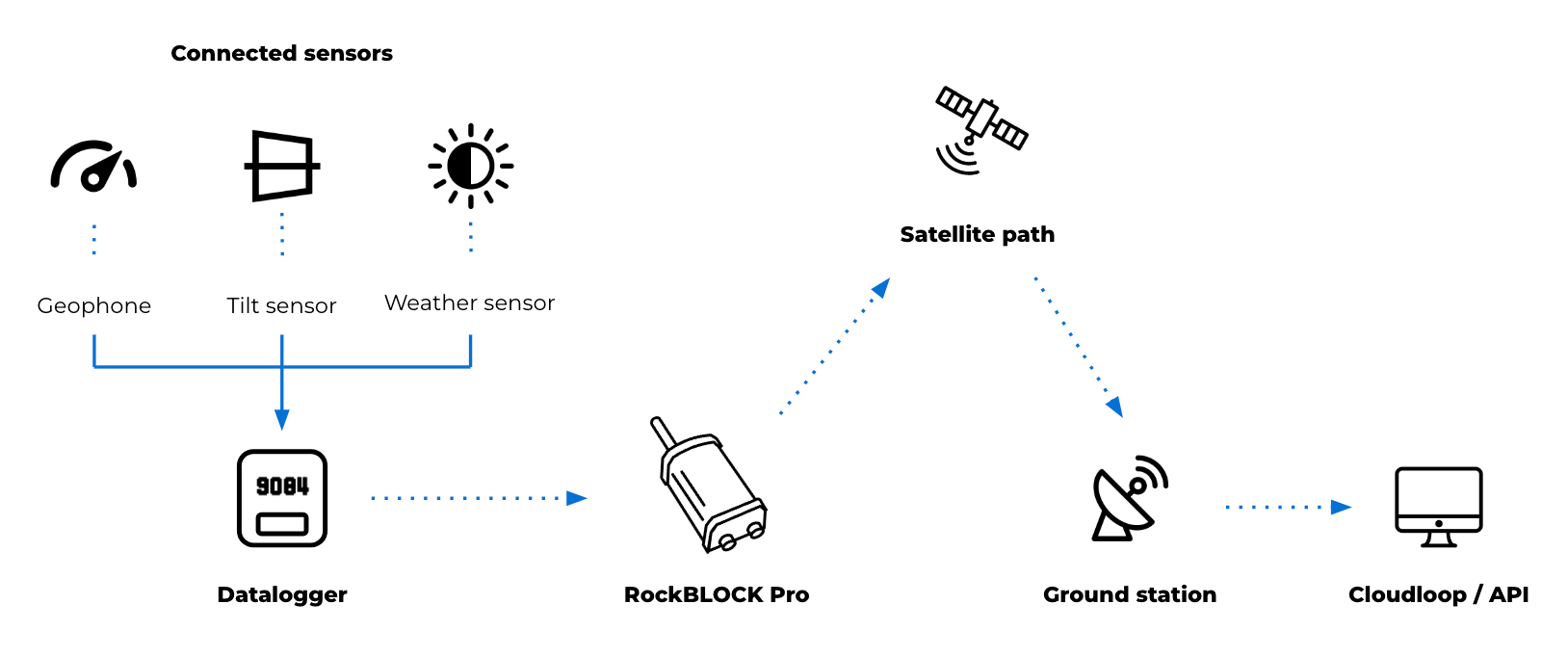

Devices operating over services such as Iridium Messaging Transport (IMT) may suit this type of data flow. RockBLOCK Pro delivers faster throughput and larger payloads than Short Burst Data (SBD), supporting aggregated sensor data, images and audio clips of up to 100 kB. This allows more flexible transmission patterns than low-bandwidth messaging.

As an IP66-rated terminal, RockBLOCK Pro has a rugged design with a built-in Iridium Certus antenna. Its combination of GNSS and serial interfaces (such as RS232/RS485) allows it to integrate with external systems or data sources. It acts as a communications layer for structured telemetry and provides a means to transmit aggregated or processed seismology and landslide data. Data sources and interfaces may include:

- Ground movement sensors such as geophones and accelerometers

- Tilt and deformation sensors for slope and structural monitoring

- Pressure and moisture sensors for groundwater and subsurface conditions

- Threshold-based triggers such as seismic switches for alert activation

- Environmental sensors including rainfall and wind

- Serial connected instruments using RS485 or RS232

- USB field access for configuration, data retrieval and maintenance.

Introducing RockBLOCK Pro for backhaul or resilience adds monitoring capability and significantly increases capacity, supporting a wider remote natural hazard monitoring system.

3. Research and AI workloads: when raw data is too large to send continuously

As monitoring systems expand to support research and model development, data requirements extend beyond alerts and telemetry. These datasets may include high resolution sensor data over extended periods, multi sensor correlations across locations, and inputs used for training and validating analytical models. This type of data is higher in volume and less time sensitive, but still requires a reliable path from remote environments.

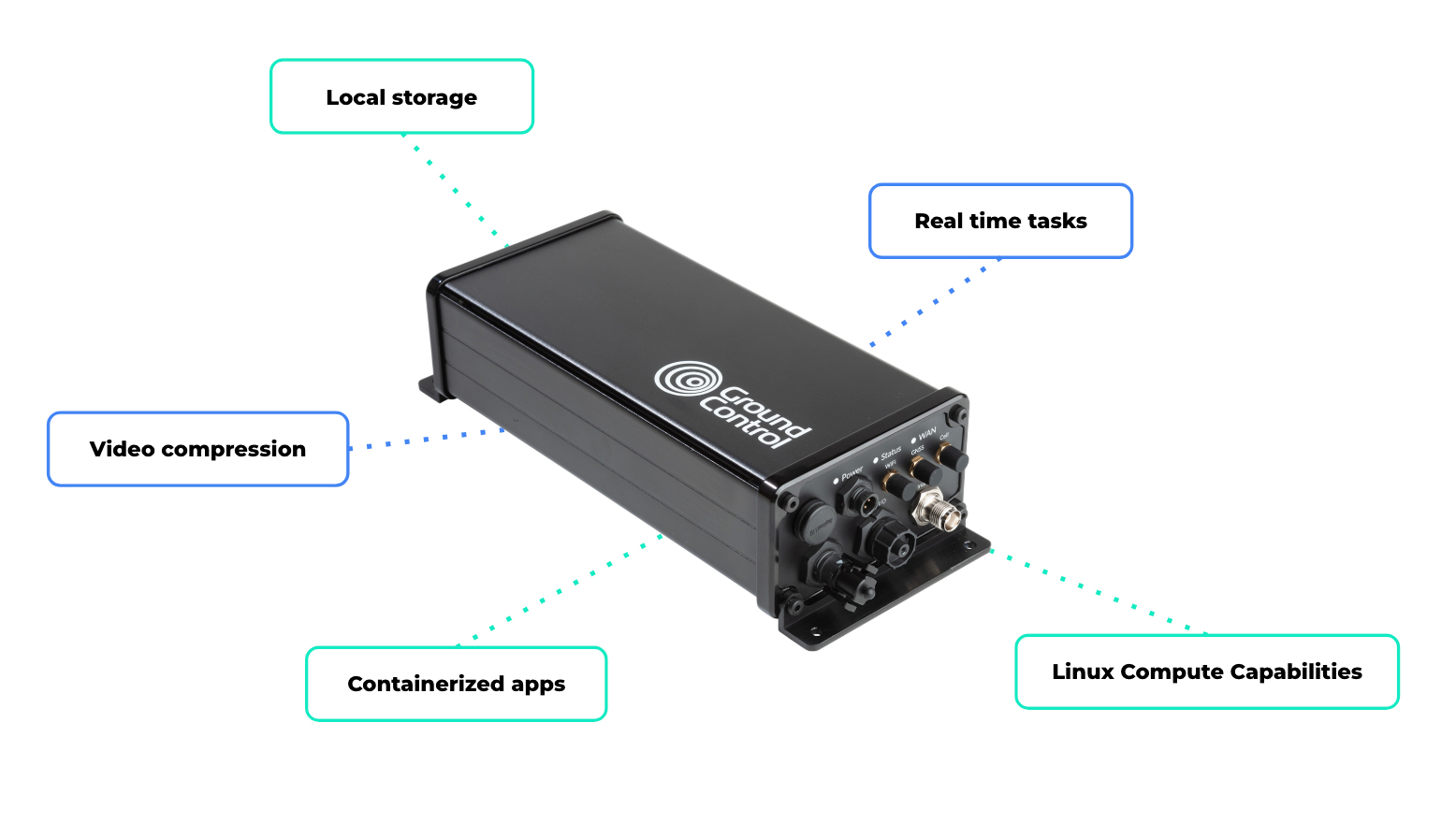

Systems often store or buffer data locally and transmit it based on available bandwidth, power and connectivity. This may involve scheduled transfers, event-based uploads, or selective transmission of processed data. Devices such as RockREMOTE Rugged support this role by combining higher throughput connectivity with embedded compute capability. They act as an interface between field deployments and cloud-based systems, enabling data handling, filtering and integration with external platforms.
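A minimal sketch of this store-and-forward behavior is shown below. It assumes an in-memory buffer and a per-pass byte budget supplied by the caller; the class and method names are hypothetical and are not part of any RockREMOTE software.

```python
from collections import deque


class StoreAndForwardBuffer:
    """Hold records locally and release them in batches that fit a byte budget."""

    def __init__(self, max_records=10_000):
        # When the buffer is full, the oldest record is dropped to make room.
        self._records = deque(maxlen=max_records)

    def store(self, record: bytes):
        self._records.append(record)

    def next_batch(self, byte_budget):
        """Pop queued records, oldest first, until the budget would be exceeded.

        A record larger than the whole budget stays queued; a real system
        would need to split or discard it.
        """
        batch, used = [], 0
        while self._records and used + len(self._records[0]) <= byte_budget:
            record = self._records.popleft()
            batch.append(record)
            used += len(record)
        return batch

    def __len__(self):
        return len(self._records)
```

A scheduler could call `next_batch` whenever a link is available, passing a budget derived from the bandwidth and power available at that moment, so that whatever does not fit simply waits for the next window.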

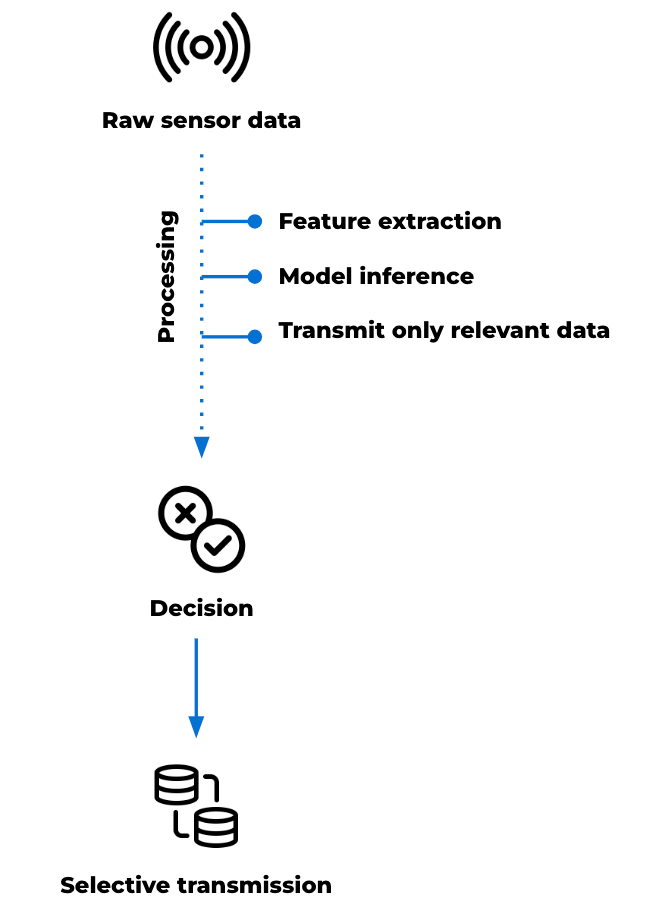

At this point, the device’s role isn’t limited to communication; it becomes part of the data management architecture. High frequency sensing, particularly in seismic monitoring, can generate more data than can be transmitted continuously over constrained links. Local processing allows this data to be reduced before transmission. Tasks such as filtering, segmentation and feature extraction can be applied at the point of collection, allowing derived parameters to be transmitted in place of raw data.

This preserves the characteristics needed for analysis while keeping data volumes manageable. Edge computing can also support lightweight analytical models, depending on the application deployed.
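To make the idea of transmitting derived parameters concrete, the sketch below reduces a window of raw samples to three numbers: peak amplitude, RMS amplitude and dominant frequency. The choice of features and the function name are assumptions for illustration; a real deployment would select features to suit its sensors and models, and would normally use an FFT library rather than the naive transform used here.

```python
import cmath
import math


def extract_features(samples, sample_rate_hz):
    """Summarize a window of samples as peak, RMS and dominant frequency."""
    n = len(samples)
    mean = sum(samples) / n
    centered = [s - mean for s in samples]  # remove the DC offset
    peak = max(abs(s) for s in centered)
    rms = math.sqrt(sum(s * s for s in centered) / n)

    # Naive DFT over the positive-frequency bins; acceptable for short
    # windows, but O(n^2), so an FFT is the usual choice for longer ones.
    best_bin, best_mag = 0, 0.0
    for k in range(1, n // 2 + 1):
        coeff = sum(
            c * cmath.exp(-2j * math.pi * k * i / n)
            for i, c in enumerate(centered)
        )
        if abs(coeff) > best_mag:
            best_bin, best_mag = k, abs(coeff)

    return {
        "peak": peak,
        "rms": rms,
        "dominant_hz": best_bin * sample_rate_hz / n,
    }
```

In this sketch a two-second window sampled at 100 Hz, which is 200 raw values, is replaced by three derived values before anything leaves the site.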

These models, typically trained on historical datasets, can be applied to live data streams to identify signals of interest. This may include distinguishing between background activity and patterns associated with instability or early seismic events. In these scenarios, transmission is based on relevance rather than volume. Data is prioritized according to its analytical value, rather than transmitted continuously.
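One way to express relevance-based transmission is a priority queue in which each item carries a score, for example the output of an edge model. The sketch below assumes such a score already exists; the class name, the scoring scale and the byte budget are all hypothetical.

```python
import heapq
import itertools


class RelevanceQueue:
    """Send the most relevant items first, within a per-pass byte budget."""

    def __init__(self):
        self._heap = []
        self._order = itertools.count()  # tie-breaker keeps insertion order

    def add(self, score, payload: bytes):
        # heapq is a min-heap, so the score is negated to pop high scores first.
        heapq.heappush(self._heap, (-score, next(self._order), payload))

    def select(self, byte_budget):
        """Pop payloads in descending score order until the budget is spent."""
        chosen, used = [], 0
        while self._heap and used + len(self._heap[0][2]) <= byte_budget:
            _, _, payload = heapq.heappop(self._heap)
            chosen.append(payload)
            used += len(payload)
        return chosen
```

With this arrangement, low-scoring background data is not discarded; it simply waits until the link has spare capacity.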

RockREMOTE Rugged’s Linux-based environment supports custom applications, enabling user-defined data processing and integration and allowing custom processing pipelines or models to be deployed at the edge. Local storage enables data retention where continuous transmission is not practical, while connectivity over Iridium Certus 100 and cellular networks provides a path for data to move to cloud environments when required.

This supports a range of system behaviors, including:

- High frequency data capture with selective transmission

- Local feature extraction to reduce bandwidth requirements

- Model inference at the edge to support early interpretation

- Buffered storage for later retrieval or batch upload

- Video compression before transmission

- Running real time tasks such as filtering, segmentation, or frequency-domain analysis

- Saving high resolution data locally

- Transmitting exception summaries via satellite.

In earthquake and landslide monitoring, the value of this compute power is in its ability to manage complex data flows locally, reduce unnecessary transmission, and support more autonomous system behavior through locally defined logic or processing in remote environments.

Satellite IoT as part of the monitoring infrastructure

The evolution of landslide and earthquake monitoring systems is shaped by two parallel developments. The range of observable data is increasing through multi-sensor integration, remote sensing and advanced analysis. At the same time, environmental and operational constraints remain consistent. Monitoring sites are often remote, power limited and difficult to access. Communications infrastructure may be unavailable, unreliable or exposed to the same hazards the system is designed to monitor.

Within this context, connectivity supports the movement of different types of data, from time critical alerts to larger datasets used for analysis. Satellite enabled devices extend coverage and enable communication where other infrastructure is limited. Different device types support different roles within the system. Some are suited to edge-based alert generation. Others support structured telemetry and system visibility. Higher capacity devices with embedded compute power can help process, prioritize and transmit larger datasets for research and AI-assisted monitoring.

The most effective system design starts with the data: its urgency, size, frequency and operational value. From there, satellite IoT can be used as a resilient layer within a wider monitoring architecture.

Building the connectivity layer for modern monitoring systems

Whether you’re building threshold-based alerts, expanding telemetry, or exploring edge processing for AI-driven monitoring, the challenge is the same: getting the right data through, at the right time, under real-world constraints.

We work with system integrators, scientists, and engineers to design connectivity architectures that balance power, cost, data volume, and resilience across satellite and hybrid networks.

Complete the form or email hello@groundcontrol.com, and we’ll be in touch within one working day.