For teams managing remote conservation areas, wildfire risk is becoming harder to predict and harder to plan for, even in regions that have not historically been considered fire-prone. Rising temperatures and longer dry periods are changing how fire behaves across forests, reserves, and protected land. One recent study found that 83.9% of wildfire-vulnerable species are now exposed to increased fire risk, with fire seasons projected to more than double in some regions. Fire seasons are lengthening, and fire behaviour is becoming less predictable and harder to contain.

You may already be seeing the signs: vegetation staying dry for longer, water sources becoming less reliable, and more ignition points across a wider area. For smaller teams covering large territories, this shifts wildfire from a seasonal concern to an ongoing operational risk.

Why remote sites are exposed

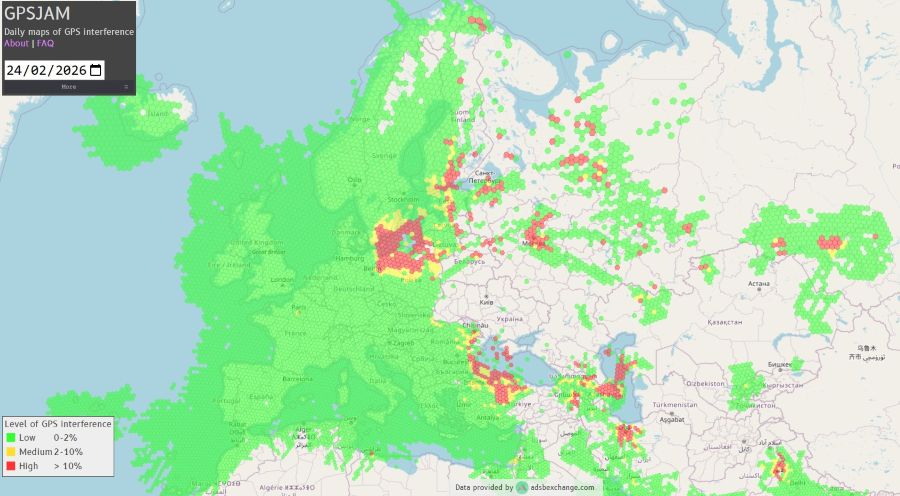

Remote conservation areas come with structural challenges that make wildfire response harder. Teams often work across large, varied terrain with limited visibility, minimal infrastructure, and inconsistent or absent cellular coverage. Ranger teams are small, but the areas they cover are not.

When a fire starts, response depends heavily on what you can detect and communicate locally. In Madagascar, one protected reserve lost around a third of its forest in a single year due to wildfire pressure linked to rising temperatures and prolonged dry conditions. Events like this highlight how quickly impact scales when detection or response is delayed.

Distance from emergency services also increases pressure on conservation teams, while budget constraints shape what can realistically be deployed. And when habitats support endangered species, even a single event can cause long-term ecological damage.

What monitoring looks like today

Wildfire monitoring is typically shaped by scale and budget. In practice, teams may rely on ranger patrols and visual observation, weather tracking such as temperature, wind, and humidity, external satellite data sources, camera systems, or WAN sensor deployments.

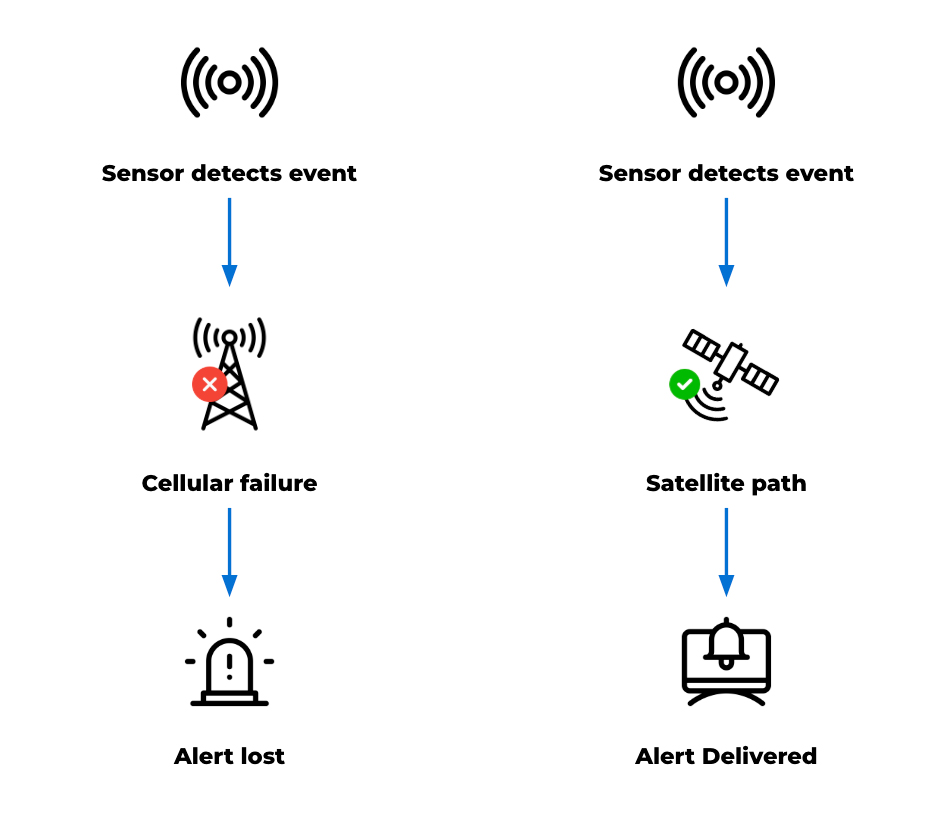

These tools are valuable. They provide signals, support situational awareness, and in some cases trigger real-time responses. However, while the capability to monitor exists, the limitations of existing approaches are largely practical. Detection may depend on chance observation and can come too late, and cellular communication can fail when an emergency disrupts the very channels needed for response.

Even when sensors are deployed, reliably transmitting alerts can be difficult. Data may be captured but not delivered effectively, creating a gap between detection and awareness. Constraints like these become more visible as wildfire risk increases.

Why time is of the essence

Delays in detecting early-stage fires reduce the available window for intervention. In fast-moving conditions, even short delays can significantly increase the scale of an incident. At the same time, teams may be distributed across the landscape, and lone workers need reliable communication for both safety and coordination.

The 2024 Jasper National Park wildfire shows how quickly situations can escalate, even in well-managed environments. The event led to around 25,000 evacuations and the loss of hundreds of structures. In more remote settings, with fewer resources, the margin for delay is even smaller.

Detection works. Delivery is the problem

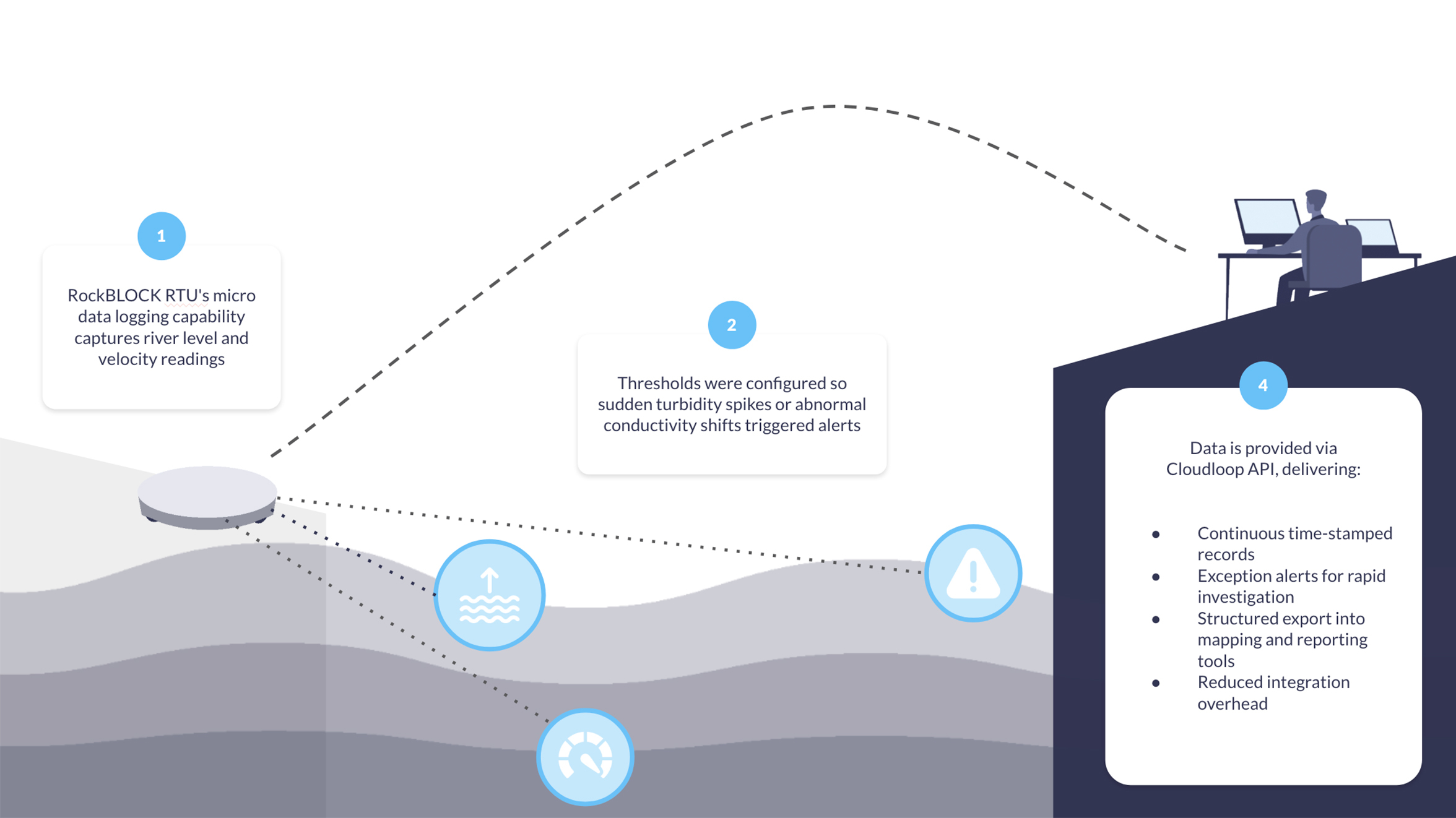

Deploying sensors to monitor temperature, humidity, and smoke across high-risk areas can provide meaningful early signals from systems that run for long periods on minimal power.

The FireFly project in Northern Thailand is a strong example. Distributed sensor nodes monitored forest conditions and identified early fire risk, with UAVs used to confirm ignition points. The system was designed specifically for remote, low-infrastructure environments, with cost in mind. But field observations highlighted a recurring issue: antenna placement and enclosure design affected system reliability, while dense vegetation and uneven terrain disrupted connectivity. Environmental conditions directly influenced whether data could leave the site. In other words, detection worked, but alert delivery didn’t always follow.

The same pattern appears in field-based wildfire and peatland monitoring projects more broadly. Sensors can detect early-stage fire risk, land degradation, or changing environmental conditions, but the value of those systems depends on whether data can leave the site quickly and reliably. Studies of IoT wildfire detection systems highlight communication reliability, limited cellular coverage, packet loss, latency, and energy consumption as practical deployment challenges in remote environments. The communication channel, or “last mile” connection, is therefore critical to whether early detection becomes timely awareness.

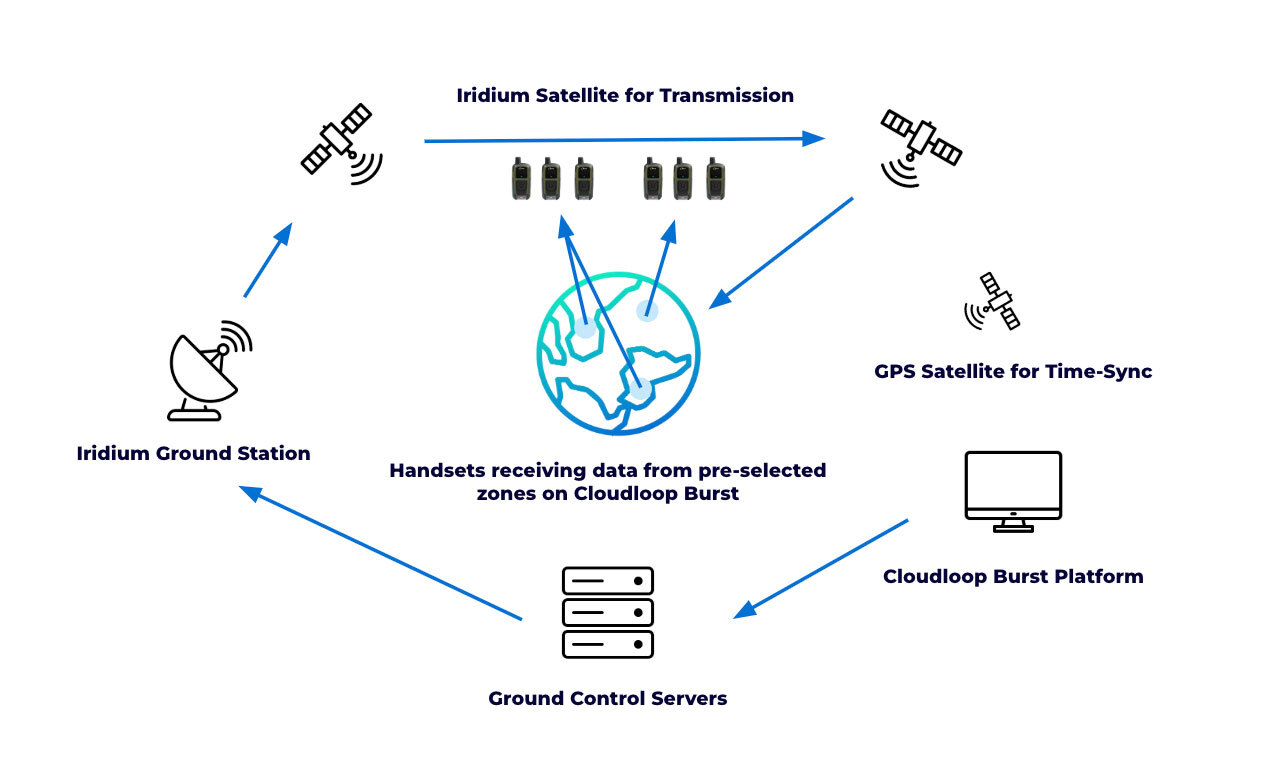

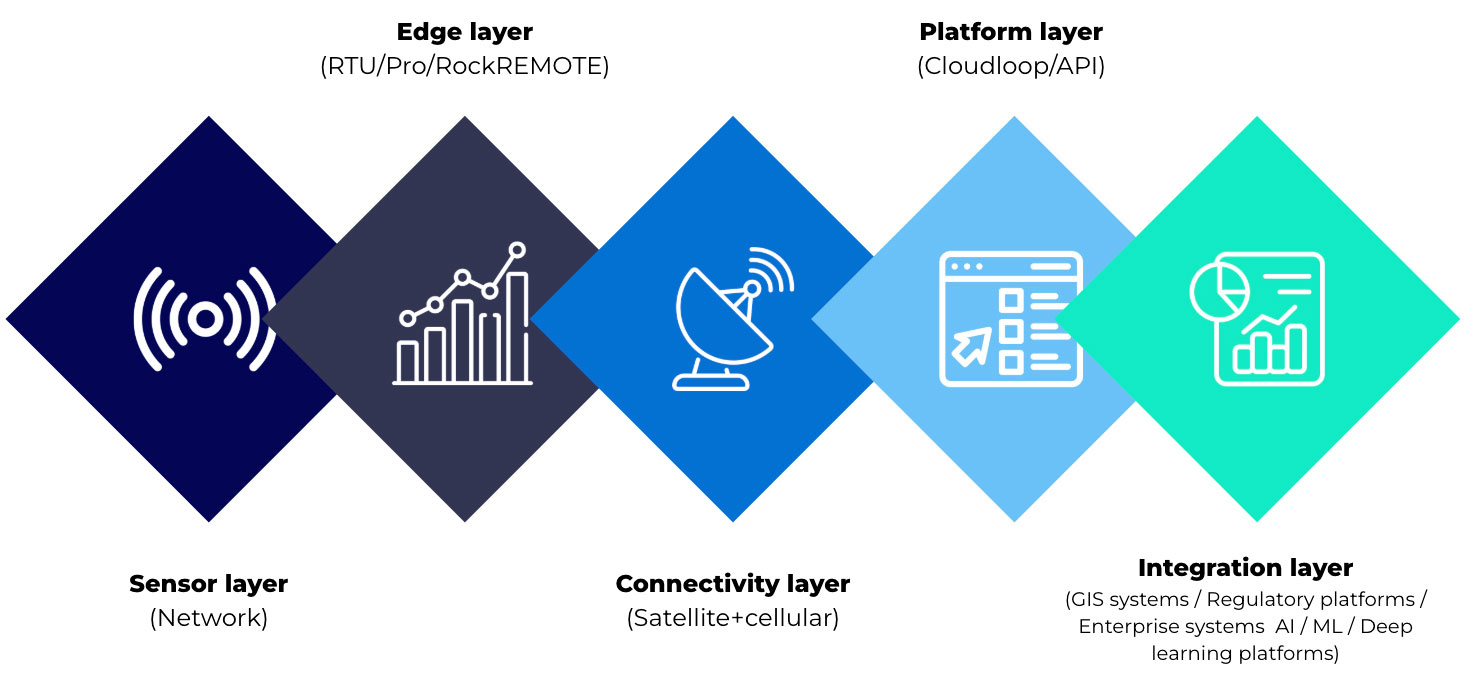

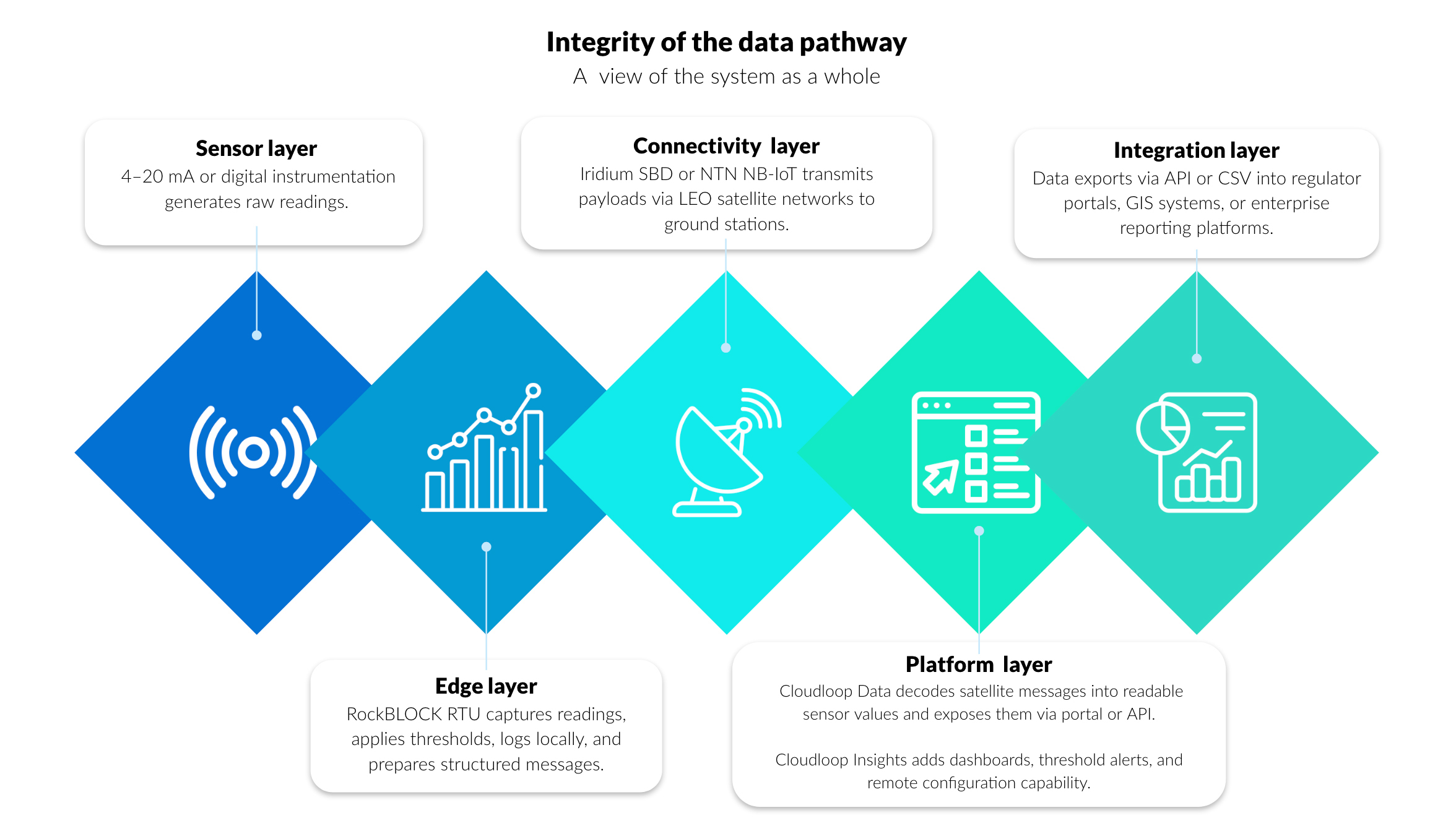

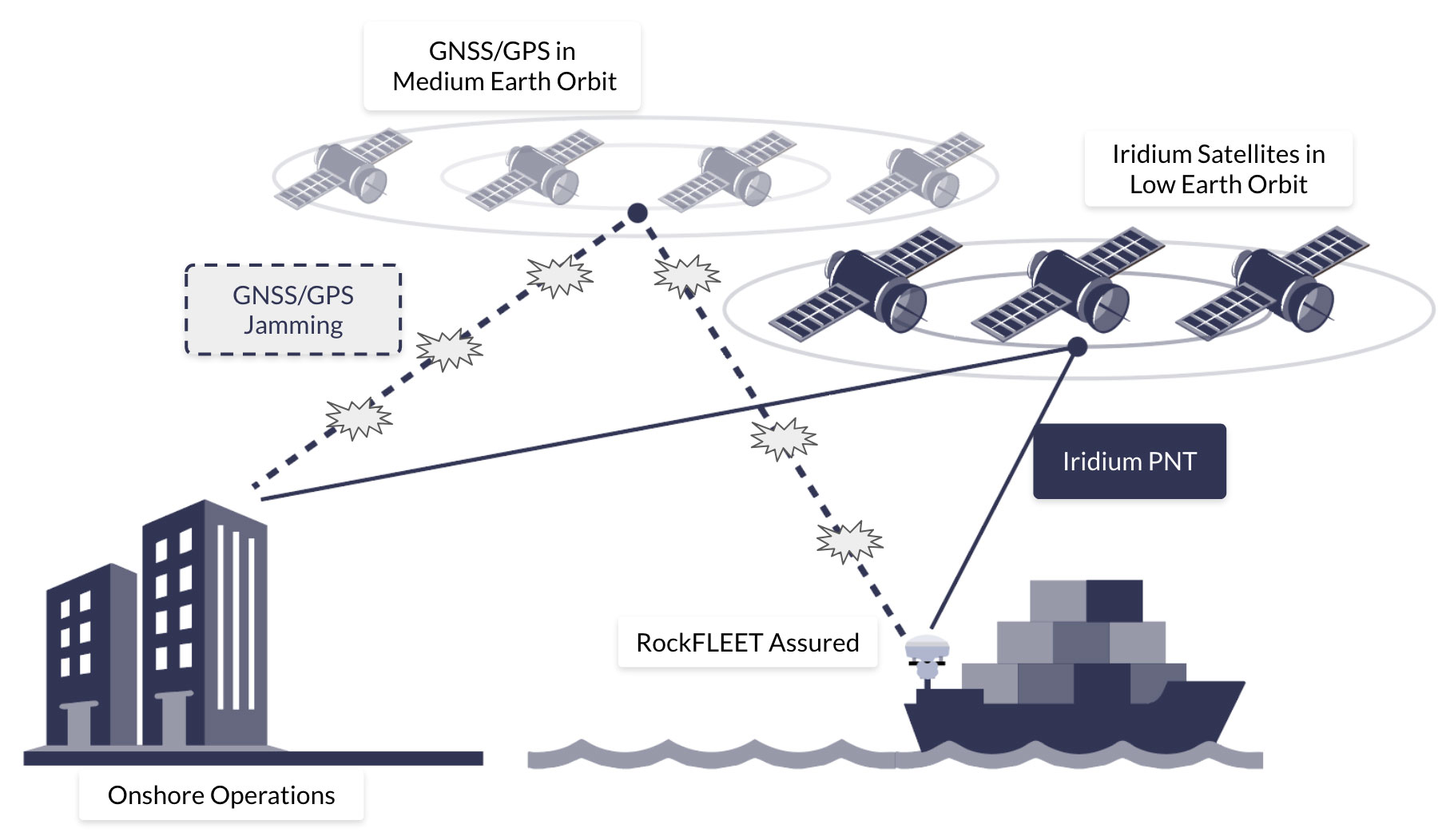

Solving the last mile with Satellite IoT

In remote conservation areas, terrain, vegetation, and distance from infrastructure all affect signal performance. Systems that depend on terrestrial networks introduce gaps and points of failure. Satellite IoT removes that dependency.

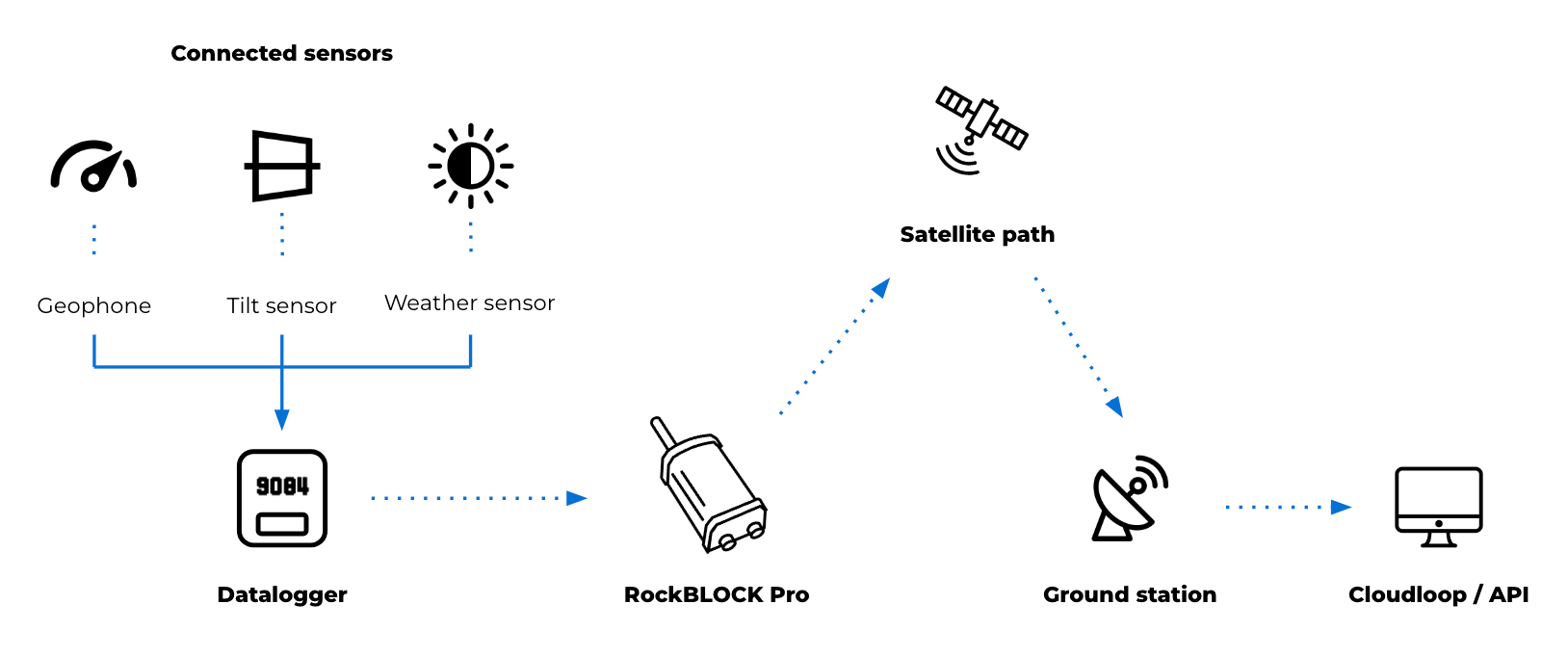

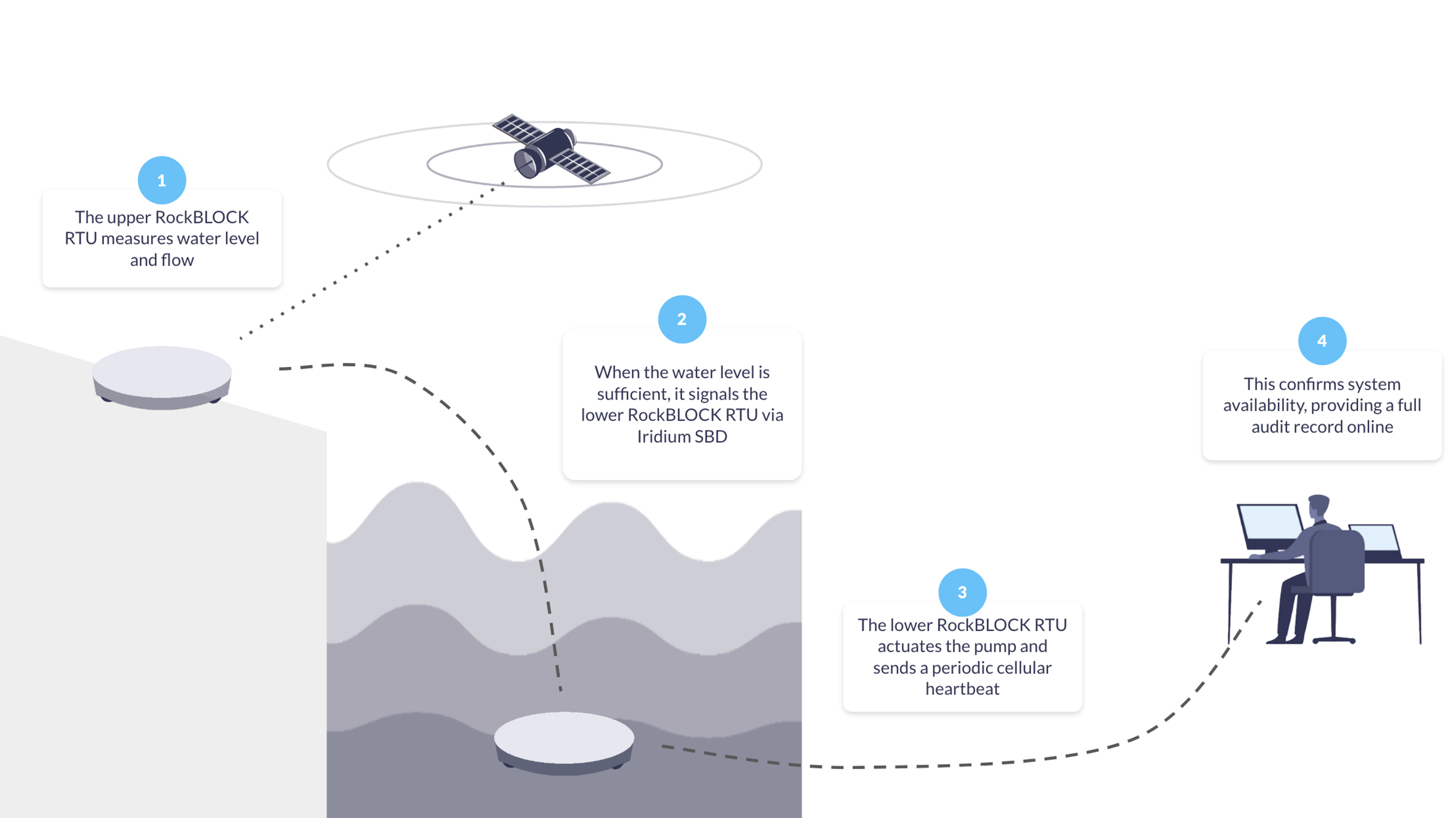

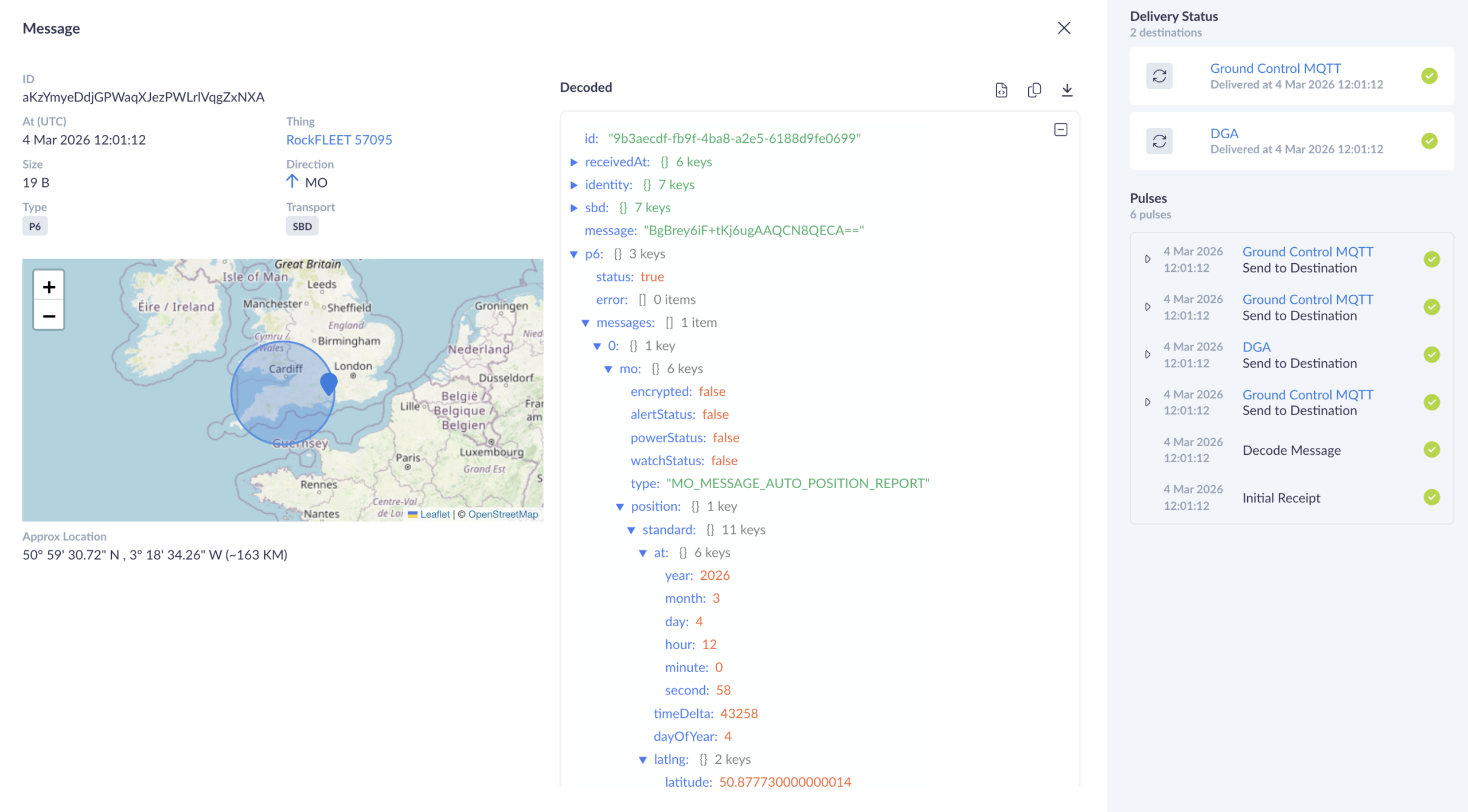

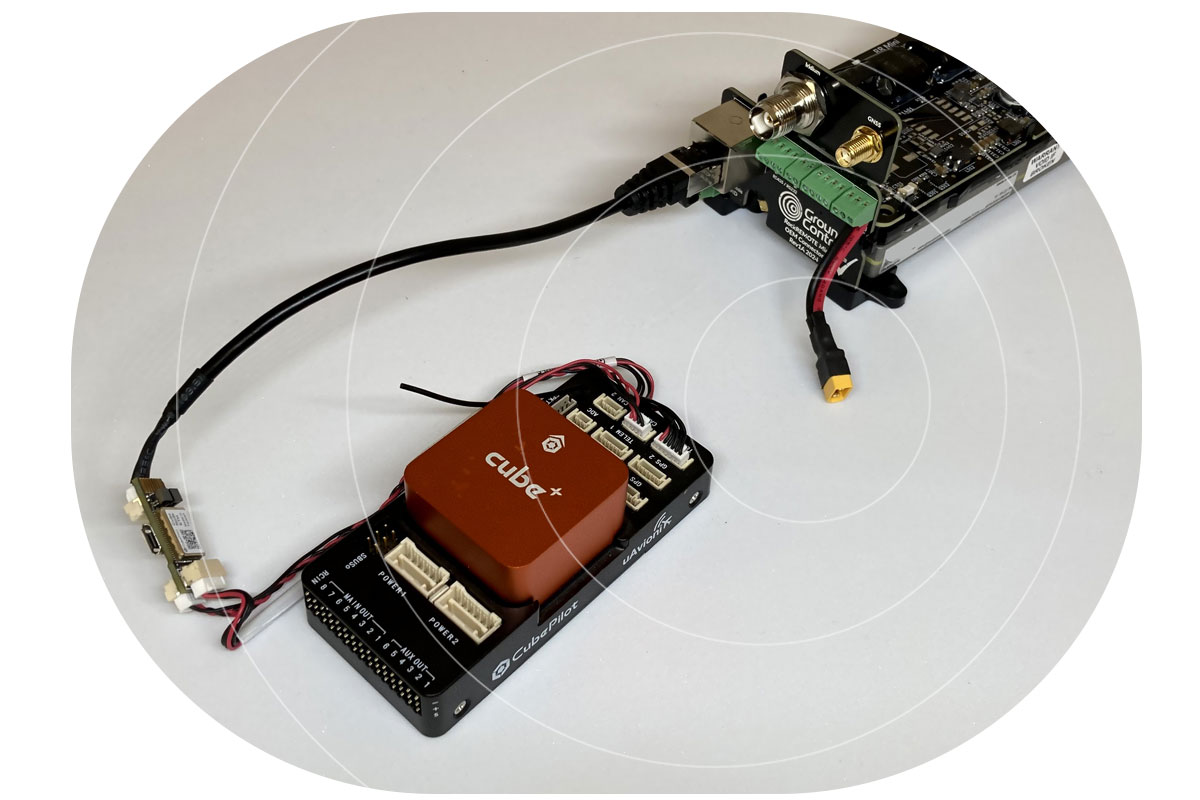

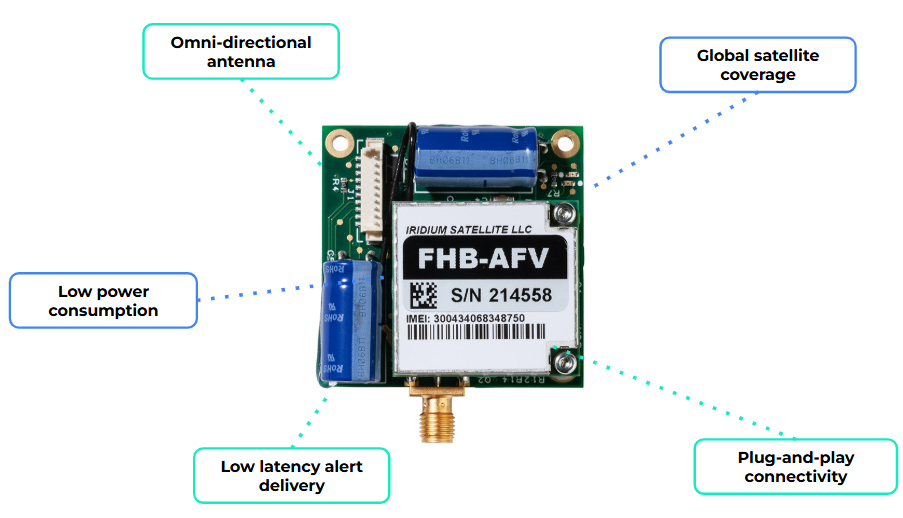

By integrating a compact modem such as RockBLOCK 9603, a sensor monitoring system can send data over the Iridium satellite network with no reliance on local infrastructure. That creates a direct path from your sensor to you, wherever the site is.

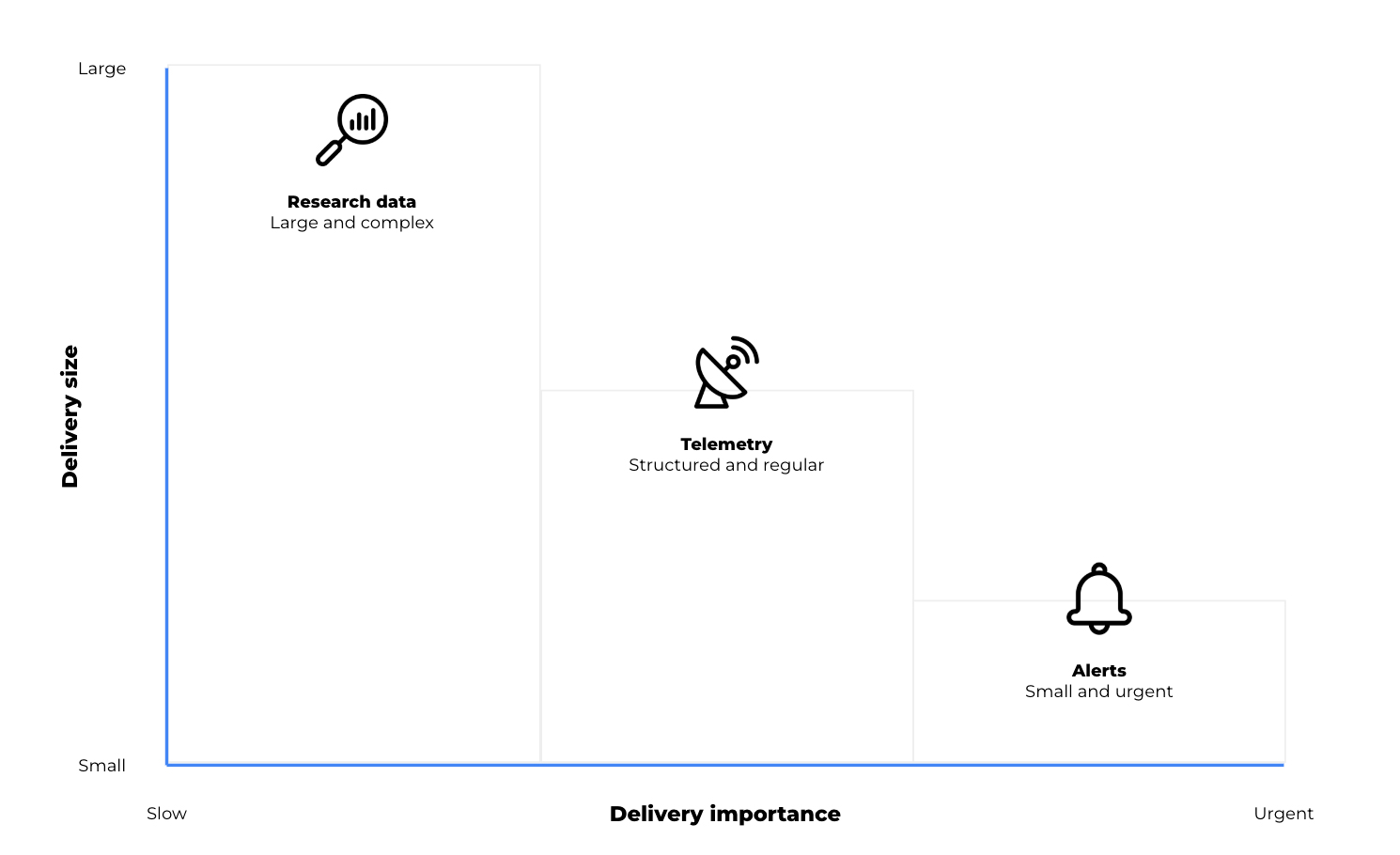

In practice, the workflow is simple:

- Sensors monitor defined environmental thresholds

- Local logic determines when conditions require attention

- A short alert message is generated

- That message is transmitted via satellite to your team.

Messages remain small, and transmission can be event-driven, supporting low-power operation and long deployment lifetimes. For conservation teams, this enables a focused deployment model: a limited number of sensors placed in high-risk areas, with a communication path that remains consistently available.
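To make the workflow concrete, here is a minimal Python sketch of the middle two steps: local logic that decides whether a reading needs attention, and a compact binary alert message. Everything named in it is an assumption for illustration, including the threshold values, the field layout, and the `MAX_PAYLOAD_BYTES` budget, which should be checked against your modem's documentation. Handing the bytes to the satellite modem is deliberately left out, because that step depends on the hardware and its interface.

```python
import struct
from dataclasses import dataclass

# Hypothetical thresholds; real values depend on the site and sensor calibration.
TEMP_LIMIT_C = 45.0
HUMIDITY_FLOOR_PCT = 20.0
SMOKE_LIMIT_PPM = 150

# Assumed payload budget in bytes; confirm the real limit for your modem.
MAX_PAYLOAD_BYTES = 340

# Big-endian layout, no padding: node id (uint16), Unix time (uint32),
# temperature in tenths of a degree C (int16), humidity % (uint8), smoke ppm (uint16).
_FORMAT = ">HIhBH"


@dataclass
class Reading:
    node_id: int
    unix_time: int
    temp_c: float
    humidity_pct: float
    smoke_ppm: int


def needs_alert(r: Reading) -> bool:
    """Local logic: alert on hot-and-dry conditions or on elevated smoke."""
    hot_and_dry = r.temp_c >= TEMP_LIMIT_C and r.humidity_pct <= HUMIDITY_FLOOR_PCT
    return hot_and_dry or r.smoke_ppm >= SMOKE_LIMIT_PPM


def encode_alert(r: Reading) -> bytes:
    """Pack a reading into a short fixed-size message."""
    payload = struct.pack(
        _FORMAT,
        r.node_id,
        r.unix_time,
        round(r.temp_c * 10),
        round(r.humidity_pct),
        r.smoke_ppm,
    )
    if len(payload) > MAX_PAYLOAD_BYTES:
        raise ValueError("alert payload exceeds the assumed message budget")
    return payload


def decode_alert(payload: bytes) -> Reading:
    """Reverse of encode_alert, for the receiving side."""
    node_id, unix_time, temp_tenths, humidity, smoke = struct.unpack(_FORMAT, payload)
    return Reading(node_id, unix_time, temp_tenths / 10, float(humidity), smoke)
```

With this layout each alert is 11 bytes. A node would call `needs_alert` on every reading and call `encode_alert` only when it returns `True`, which is what keeps transmission event-driven.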

When a fire is detected, the alert is delivered reliably and in time to act.

When you need more field capability

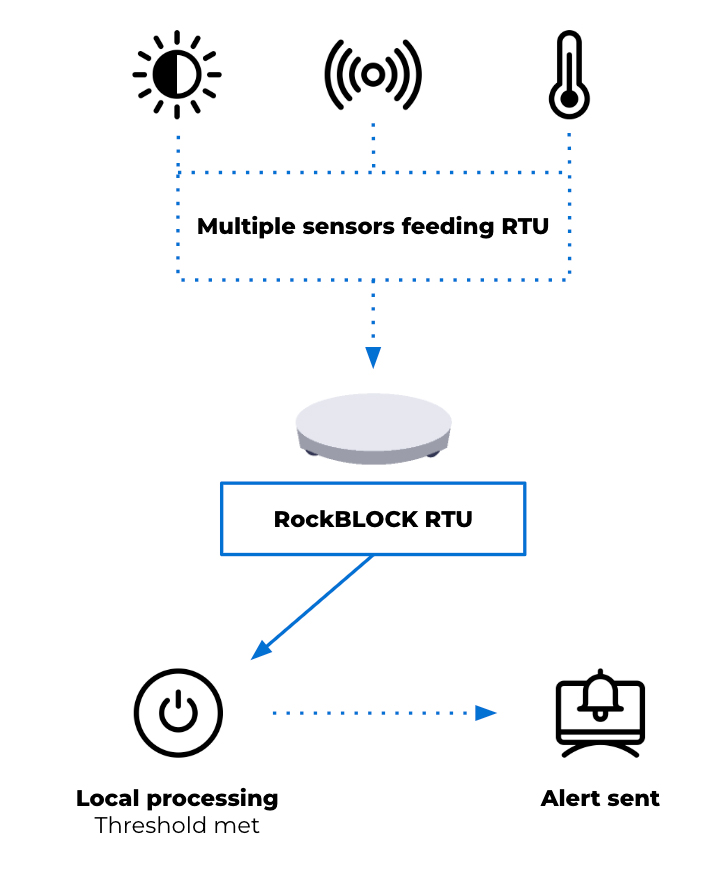

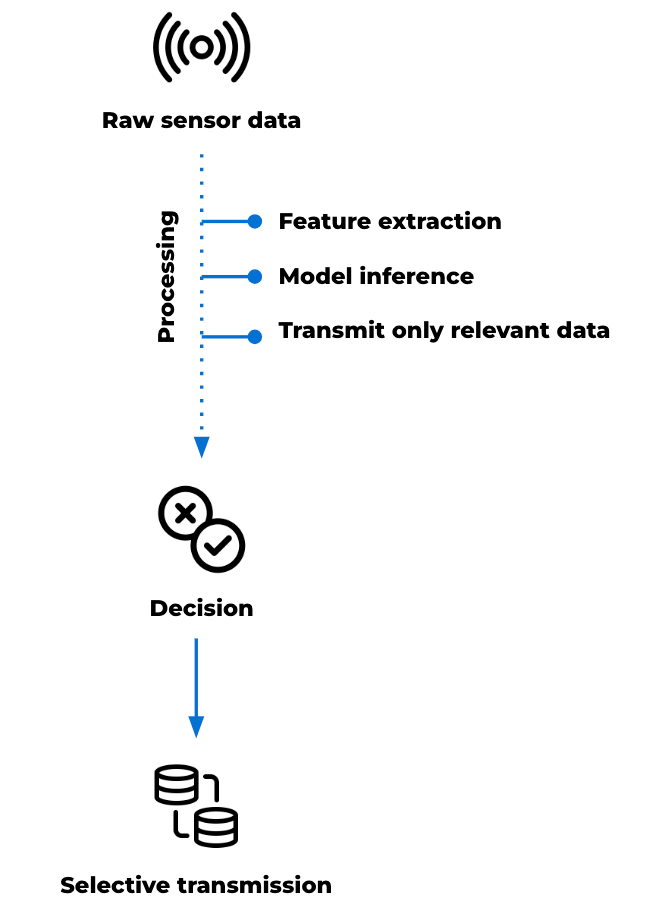

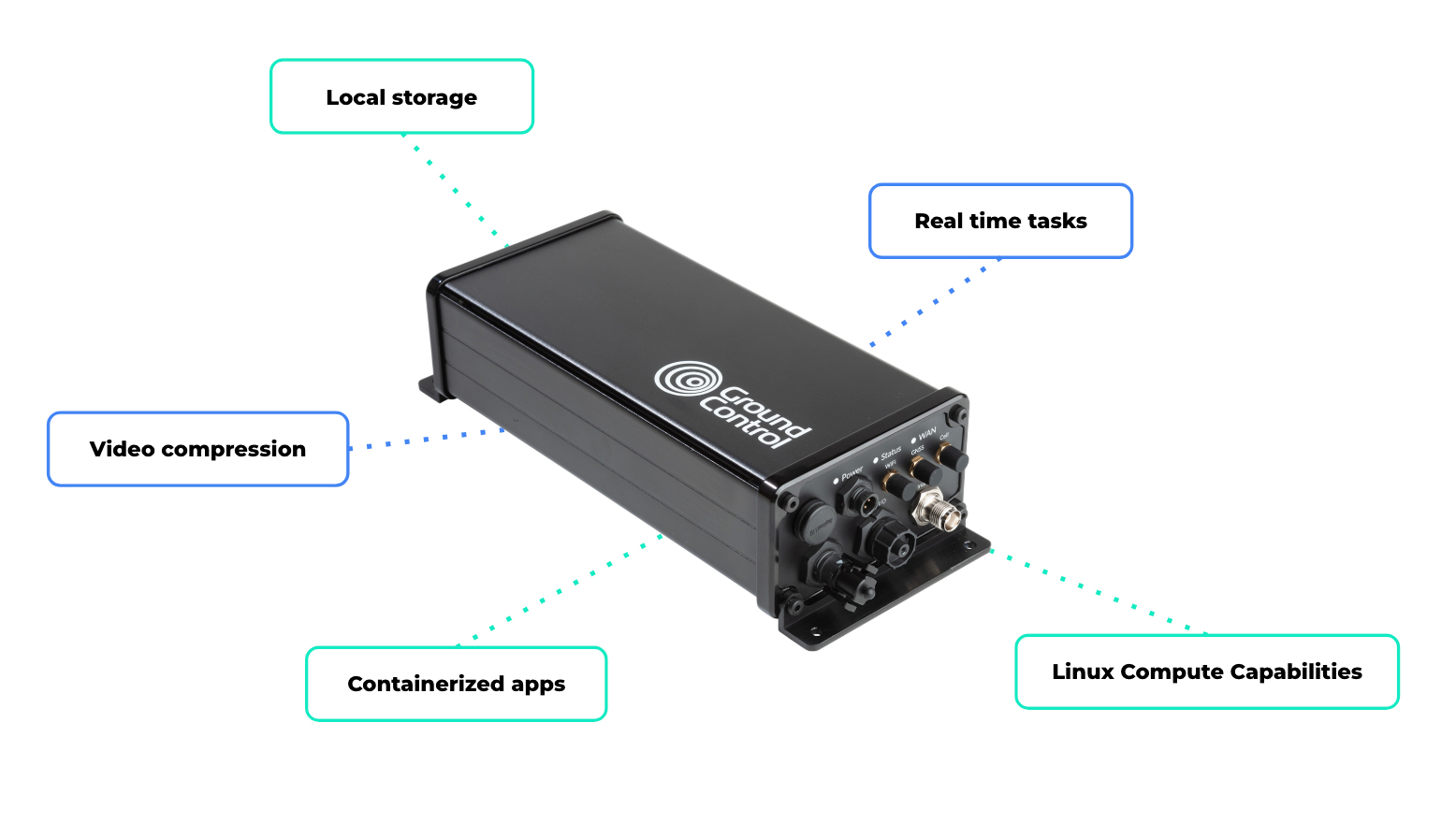

For some deployments, a compact satellite modem may be enough to connect an existing sensor system. For others, the monitoring setup needs to handle multiple sensor inputs, apply logic locally, and decide when an alert should be sent.

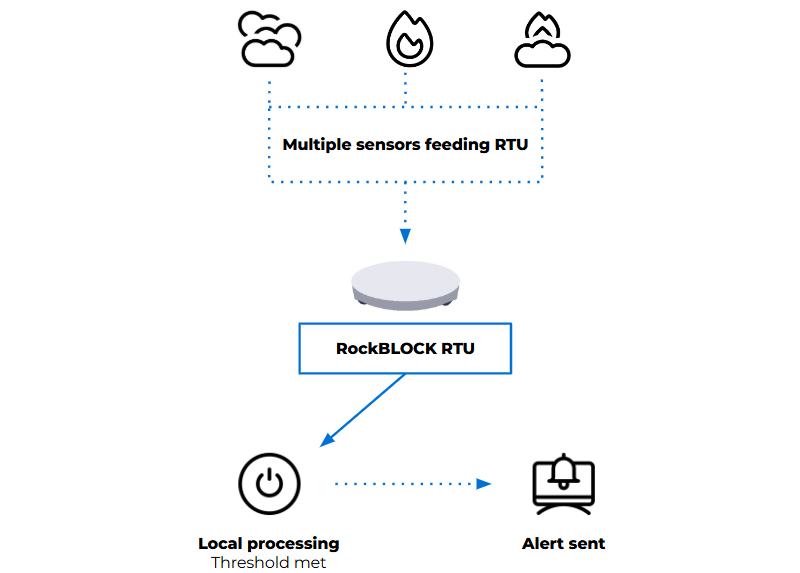

That’s where a device such as RockBLOCK RTU can be useful. It aggregates sensor inputs from a range of sources and applies threshold logic in the field. When defined conditions are met, it generates alerts and transmits telemetry via its satellite connection.

This approach reduces dependency on continuous connectivity and avoids the need to send raw data elsewhere for processing. Decisions are made where the data is generated, according to the conditions being monitored. Reducing unnecessary data transmission also helps keep satellite costs to a minimum.

In practice, that gives you:

- One unit integrating multiple sensors

- Configurable thresholds based on your environment

- Event-based alerting instead of continuous transmission

- Context included with each alert.
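The points above can be sketched in a few lines of Python. This is an illustration of the general pattern only, not the RockBLOCK RTU's configuration format or firmware behaviour: the `Channel` and `FieldUnit` names, the threshold values, and the alert dictionary are all hypothetical. Each channel here triggers on a rising value; a falling-threshold channel such as humidity would need the comparisons inverted.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class Channel:
    trigger: float        # raise an alert when the value reaches this level
    clear: float          # re-arm only once the value falls back to this level
    active: bool = False  # True while the channel is in the alert state


@dataclass
class FieldUnit:
    """Hypothetical single unit that aggregates several sensor channels."""
    channels: Dict[str, Channel]
    latest: Dict[str, float] = field(default_factory=dict)

    def update(self, name: str, value: float) -> Optional[dict]:
        """Record a reading; return a message only when the state changes."""
        ch = self.channels[name]
        self.latest[name] = value
        if not ch.active and value >= ch.trigger:
            ch.active = True
            return self._message("alert", name, value)
        if ch.active and value <= ch.clear:
            ch.active = False
            return self._message("cleared", name, value)
        return None  # no state change, so nothing is transmitted

    def _message(self, event: str, name: str, value: float) -> dict:
        # Context: the most recent value from every channel seen so far.
        return {"event": event, "channel": name, "value": value,
                "context": dict(self.latest)}


unit = FieldUnit({
    "temp_c": Channel(trigger=45.0, clear=40.0),
    "smoke_ppm": Channel(trigger=150.0, clear=100.0),
})
```

The gap between `trigger` and `clear` is a design choice: without it, a reading hovering around a single threshold would generate a message on every small fluctuation, which works against the goal of keeping satellite traffic low.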

The right approach depends on the scale of the site, the number of sensors required, and how much processing needs to happen in the field.

Designing for increased wildfire risk

Effective wildfire monitoring systems prioritise early detection and dependable alert delivery. They need to operate with limited power, minimal infrastructure, and changing environmental conditions, while remaining simple enough to deploy and maintain and reliable enough to trust when something happens.

Recent events reinforce the need for this approach. Reliability, simplicity, and clear information delivered to the right people at the right time can protect lives and support more effective response.

Facing a remote monitoring or alerting challenge?

If your team needs to detect environmental risk, transmit alerts from areas without reliable cellular coverage, or keep remote systems connected, we can help you explore the right satellite IoT approach.

Complete the form, or email hello@groundcontrol.com to discuss your use case with our team – we’ll reply within one working day.